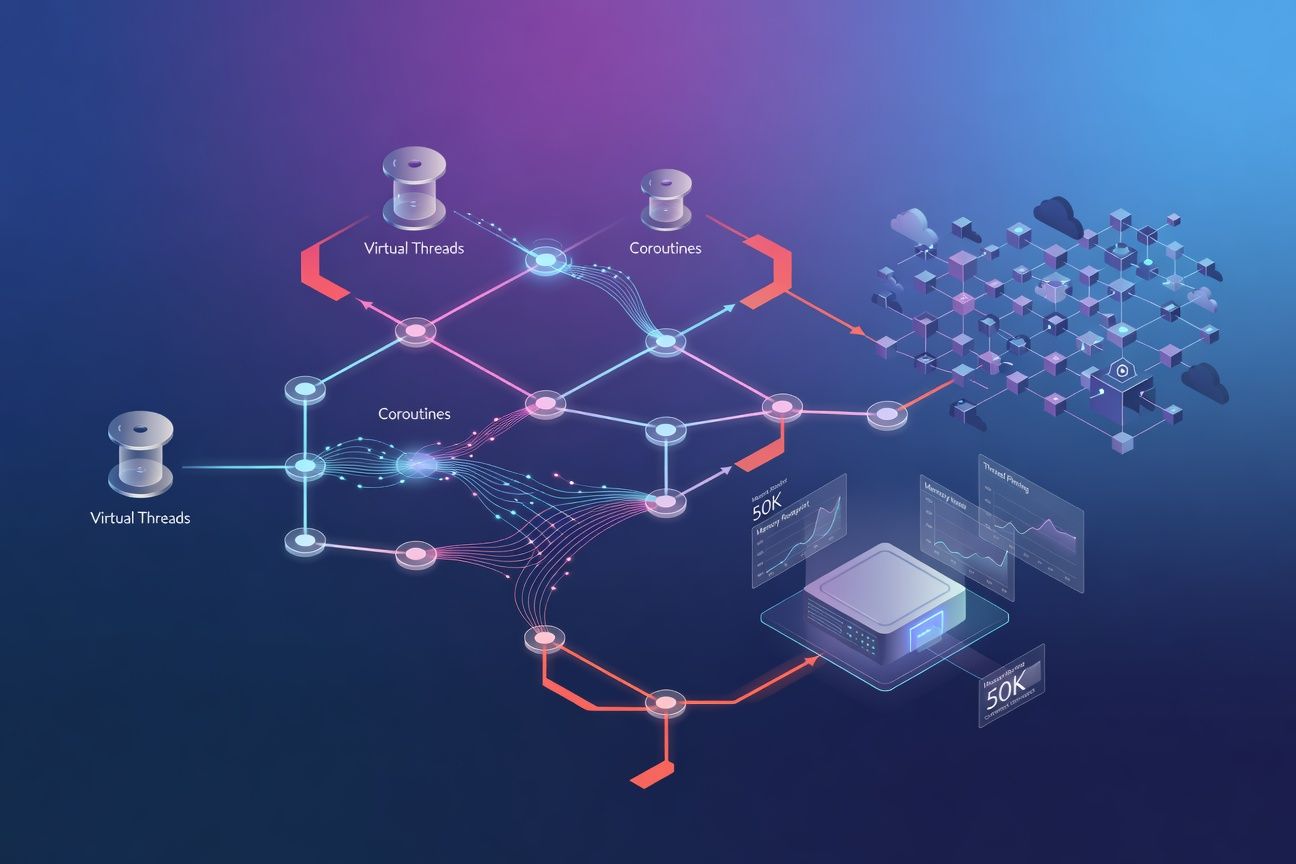

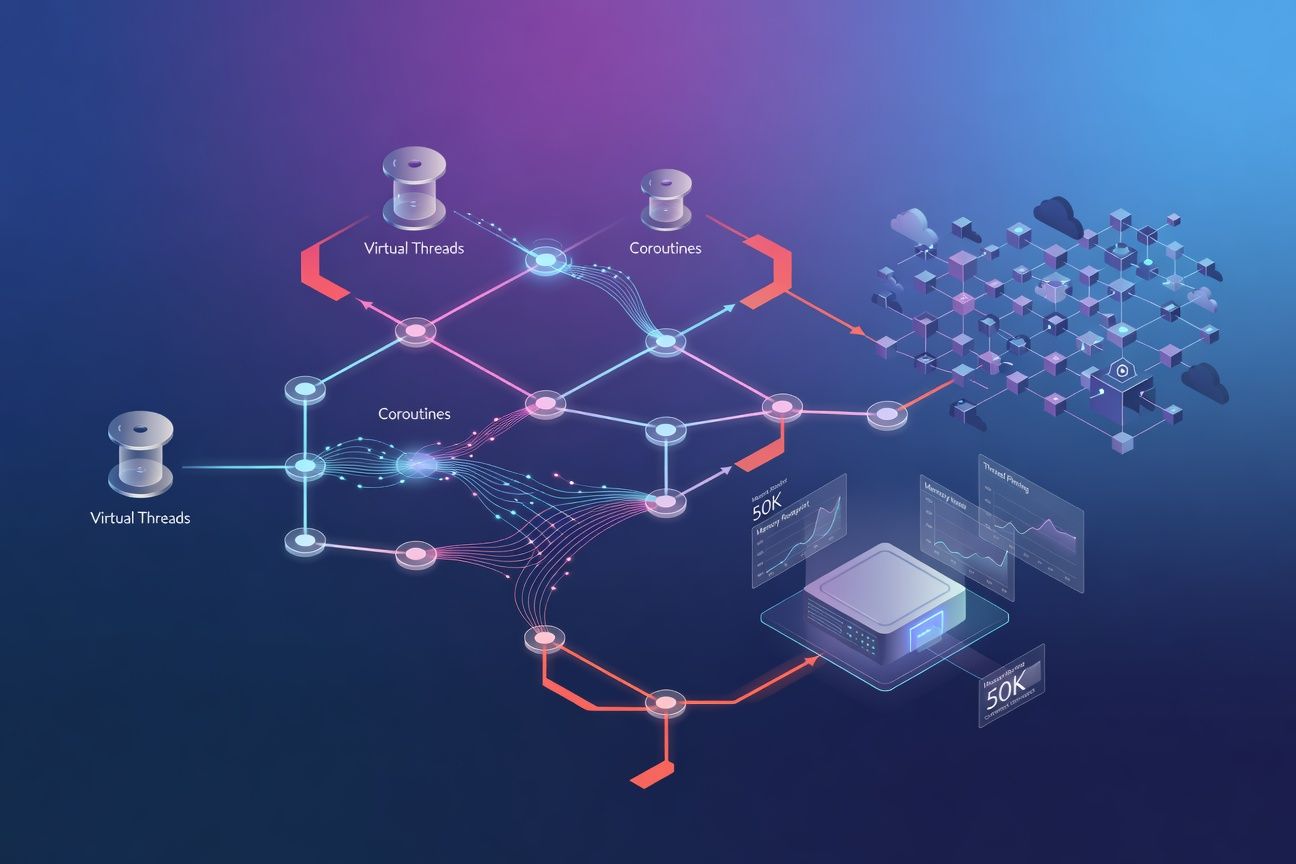

Ktor at 50K connections: coroutines vs virtual threads

Meta description: Benchmark Ktor coroutines vs JVM virtual threads at 50K connections. See why a $20 VPS can outperform Kubernetes for early-stage startups.

Tags: kotlin, backend, startup, architecture, cloud

TL;DR

Ktor with coroutines handles 50K concurrent connections on a single 4-core VPS using ~1.2 GB of memory (RSS). Virtual threads (Project Loom) get you there too, but with roughly 2x the memory footprint and subtle thread-pinning traps when they hit synchronized blocks in Kotlin code. For startups under 100K DAU, a properly configured Ktor coroutine server on a $20 VPS outperforms a poorly configured Kubernetes cluster and costs close to an order of magnitude less to operate.

Stop over-engineering your infrastructure

Every hour your two-person startup spends debugging Kubernetes networking or tuning pod autoscaling is an hour not spent on the product. The infrastructure should disappear, and with modern Ktor, it can.

Most teams adopt Kubernetes at 500 DAU because “we’ll need it eventually.” You won’t. Not for a long time.

Benchmark methodology

All figures in this post come from load tests run with k6 against a Ktor 2.3.x / JDK 21 server on a Hetzner CPX31 (4 vCPU AMD, 8 GB RAM, Ubuntu 22.04). The test profile held 50K concurrent WebSocket connections idle with a keep-alive ping every 30 seconds, plus a sustained 2K RPS of JSON GET requests. OS tuning: ulimit -n 131072, net.core.somaxconn=65535, net.ipv4.ip_local_port_range=1024 65535. Memory was measured as RSS via ps and heap via JMX’s MemoryMXBean after a 10-minute steady state with ZGC.
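The heap half of that measurement can also be read from inside the JVM. A minimal sketch, assuming you call it from a debug endpoint or a periodic log line; the function name heapUsedMb is illustrative and not part of the benchmark harness:

```kotlin
import java.lang.management.ManagementFactory

// Hypothetical helper: reads current heap usage from the platform MemoryMXBean,
// the same bean a remote JMX client would query.
fun heapUsedMb(): Long {
    val heap = ManagementFactory.getMemoryMXBean().heapMemoryUsage
    return heap.used / (1024 * 1024)
}
```

RSS still has to come from the OS (for example via ps), because it also covers memory the heap figure does not include, such as metaspace, thread stacks, and direct buffers.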

Virtual threads vs coroutines: the real tradeoffs

With JDK 21+ now mainstream, Ktor gives you two high-concurrency models: its native coroutine dispatcher and the new virtual thread executor. Both are lightweight compared to platform threads, but they diverge in ways that matter.

Memory per connection

| Metric | Coroutines (Dispatchers.IO) | Virtual Threads (Loom) | Platform Threads |
|---|---|---|---|
| Stack memory per task | ~256 bytes to a few KB (heap) | ~1-2 KB (resizable stack) | ~1 MB (fixed stack) |
| 50K idle connections (RSS) | ~1.2 GB | ~2.5 GB | ~50 GB (infeasible) |
| Context switch cost | Continuation resume (ns) | Carrier mount/unmount (µs) | Full OS context switch (µs) |
| GC pressure | Lower (object-based) | Higher (stack frames on heap) | N/A |

Coroutines win on raw memory efficiency because they’re compiler-transformed state machines allocated on the heap. No stack frames at all until they resume. Virtual threads carry a growable stack, far lighter than platform threads but still measurably heavier than a suspended coroutine.
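To put the RSS row in per-connection terms, here is a small worked calculation. It is only a sketch: the inputs are the table's rounded figures, the names are illustrative, and decimal units (1 GB = 1000 MB) are assumed to keep the arithmetic simple.

```kotlin
// Figures taken from the table above.
val CONNECTIONS = 50_000L
val STACK_MB_PER_PLATFORM_THREAD = 1L

// Average resident memory per connection, in KB.
// This spreads the JVM's fixed baseline across all connections,
// so it overstates the marginal cost of one more connection.
fun perConnectionKb(rssMb: Long, connections: Long = CONNECTIONS): Long =
    rssMb * 1000 / connections

val coroutineKb = perConnectionKb(rssMb = 1_200)      // 24 KB
val virtualThreadKb = perConnectionKb(rssMb = 2_500)  // 50 KB

// Platform threads: reserved stack alone, before any heap is counted.
val platformStackGb = CONNECTIONS * STACK_MB_PER_PLATFORM_THREAD / 1000  // 50 GB
```

Both averages are well above the per-task stack figures in the first row. That is expected, since RSS also includes the JVM baseline and whatever per-connection state the server keeps beyond the task itself.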

The thread-pinning trap

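This one bites people: on JDK 21, a virtual thread that blocks inside a synchronized block is pinned to its carrier thread, so that carrier cannot run any other virtual thread until the block exits (JEP 491 lifts this restriction in JDK 24). In Kotlin code, synchronized shows up in places you might not expect: the default lazy delegate, certain companion object initializations, and some coroutine internals.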

```kotlin
import kotlinx.coroutines.sync.Mutex
import kotlinx.coroutines.sync.withLock

// This PINS a virtual thread to its carrier while loadFromDatabase() blocks
val cachedLazily: ExpensiveResource by lazy {
    // Under the hood: synchronized block (LazyThreadSafetyMode.SYNCHRONIZED)
    loadFromDatabase()
}

// Safe alternative: suspend-aware double-checked lock
private val mutex = Mutex()

@Volatile
private var cached: ExpensiveResource? = null

suspend fun getResource(): ExpensiveResource {
    cached?.let { return it }  // fast path once initialized
    return mutex.withLock {
        cached ?: loadFromDatabase().also { cached = it }
    }
}
```

The Mutex-guarded pattern avoids the race condition of a naive AtomicReference check-then-set. Only one coroutine enters loadFromDatabase(), and all others see the initialized value. With coroutines on Dispatchers.IO, pinning isn’t a problem at all, but this pattern is still good hygiene.
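A Mutex only helps in suspend code. For blocking code that runs directly on virtual threads, the same double-checked shape can be sketched with java.util.concurrent.locks.ReentrantLock, which JEP 444 suggests in place of synchronized around blocking work. LockedLazy is a hypothetical helper written for this post, not a standard library class:

```kotlin
import java.util.concurrent.locks.ReentrantLock
import kotlin.concurrent.withLock

// Hypothetical helper: lazy initialization without a synchronized block.
class LockedLazy<T : Any>(private val initializer: () -> T) {
    private val lock = ReentrantLock()

    @Volatile
    private var value: T? = null

    fun get(): T {
        value?.let { return it }  // fast path, no locking once initialized
        return lock.withLock {
            // Re-check under the lock so the initializer runs at most once.
            value ?: initializer().also { value = it }
        }
    }
}
```

A caller would hold something like `val resource = LockedLazy { loadFromDatabase() }` and call `resource.get()`. A virtual thread waiting on the lock parks and frees its carrier instead of pinning it.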

Structured concurrency is the real win

```kotlin
get("/dashboard/{userId}") {
    // Read the path parameter; respond 400 if it is missing
    val userId = call.parameters["userId"]
        ?: return@get call.respond(HttpStatusCode.BadRequest)

    val (profile, metrics) = coroutineScope {
        val p = async { userService.getProfile(userId) }
        val m = async { analyticsService.getMetrics(userId) }
        p.await() to m.await()
    }
    call.respond(DashboardResponse(profile, metrics))
}
```

If the client disconnects, both coroutines cancel automatically. With virtual threads, you wire up ExecutorService shutdown logic manually. Doable, but boilerplate that Ktor already solved. JEP 453 (Structured Concurrency) aims to close this gap for virtual threads, but it’s still in preview as of JDK 23.
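For comparison, here is roughly what the manual wiring looks like with virtual threads on JDK 21. This is a sketch under stated assumptions, not a recommended implementation: fetchBoth is a hypothetical helper, and the two loaders stand in for blocking service calls.

```kotlin
import java.util.concurrent.Callable
import java.util.concurrent.Executors

// Hypothetical helper: runs two blocking loaders on virtual threads (JDK 21+).
fun <A, B> fetchBoth(loadA: () -> A, loadB: () -> B): Pair<A, B> =
    Executors.newVirtualThreadPerTaskExecutor().use { executor ->
        val a = executor.submit(Callable { loadA() })
        val b = executor.submit(Callable { loadB() })
        try {
            a.get() to b.get()
        } catch (e: Exception) {
            // Manual cleanup: interrupt whichever task is still running.
            a.cancel(true)
            b.cancel(true)
            throw e
        }
        // use {} calls close(), which waits for submitted tasks to finish.
    }
```

The sketch only notices failures in await order: if the second loader fails while the first is still running, nothing is cancelled until a.get() returns. It also does nothing about client disconnects unless the caller interrupts the request thread.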

The $20 VPS configuration that works

I’ve built production mobile backends on this setup, and this configuration handles 50K concurrent connections on the Hetzner CPX31:

```kotlin
embeddedServer(Netty, port = 8080) {
    install(ContentNegotiation) { json() }
    install(Compression) { gzip() }
}.start(wait = true)
```

```
-XX:+UseZGC -XX:MaxRAMPercentage=75.0
```

Netty’s default pipeline handles high connection counts once the OS limits are raised. If you need an explicit ceiling, add a ChannelHandler that tracks active connections and rejects beyond your threshold. There’s no built-in Ktor property for this. The real bottleneck is always ulimit -n and somaxconn at the OS level.
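The counting logic such a handler needs is small. Below is a minimal sketch of just that part, with the Netty wiring deliberately left out. ConnectionLimiter is a hypothetical class, and the comments only indicate where a handler would call it.

```kotlin
import java.util.concurrent.atomic.AtomicInteger

// Hypothetical helper: thread-safe ceiling on concurrently open connections.
class ConnectionLimiter(private val maxConnections: Int) {
    private val active = AtomicInteger(0)

    // Call when a connection opens (e.g. from a handler's channelActive).
    // Returns false if the connection should be closed immediately.
    fun tryAcquire(): Boolean {
        while (true) {
            val current = active.get()
            if (current >= maxConnections) return false
            if (active.compareAndSet(current, current + 1)) return true
        }
    }

    // Call once for every accepted connection when it closes
    // (e.g. from a handler's channelInactive).
    fun release() {
        active.decrementAndGet()
    }

    fun activeCount(): Int = active.get()
}
```

The compare-and-set loop keeps the counter from overshooting the limit when many connections arrive at once, which a plain increment-then-check would allow.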

Cost comparison

| Setup | Monthly Cost | What You Get |
|---|---|---|
| Hetzner CPX31 (4 vCPU, 8 GB) | ~$20 | Single-node, 50K connections proven |
| GKE Autopilot (2 e2-medium nodes) | ~$150 | Control plane + compute + egress |
| EKS (control plane + 2 t3.medium) | ~$190 | $73 control plane + ~$60/node |

That’s a 7-10x cost difference. For a typical mobile backend (REST endpoints returning JSON, polling health checks, push notification registration) the single VPS handles the load cleanly under 100K DAU.
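The 7-10x range follows directly from the table. A short worked calculation, using the table's rounded monthly prices (the names are illustrative):

```kotlin
// Monthly prices in USD, taken from the table above.
val VPS_MONTHLY = 20.0
val GKE_MONTHLY = 150.0
val EKS_MONTHLY = 190.0

fun costRatio(managed: Double, vps: Double = VPS_MONTHLY): Double = managed / vps

val gkeRatio = costRatio(GKE_MONTHLY)  // 7.5
val eksRatio = costRatio(EKS_MONTHLY)  // 9.5

// Absolute yearly difference against the more expensive option.
val eksYearlyExtra = (EKS_MONTHLY - VPS_MONTHLY) * 12  // 2040.0
```

In absolute terms that is about $2,000 a year against EKS. For a two-person team, the operating time saved probably matters more than the bill itself.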

When you actually need Kubernetes

I don’t want to oversell the single-VPS approach. Kubernetes solves real problems: automated health-check restarts, rolling zero-downtime deploys, secrets management, centralized observability. You need it when you have multiple independently deployable services or traffic that demands horizontal autoscaling beyond a single node.

On a single VPS, you fill these gaps with proven tools: systemd watchdog for auto-restart, blue-green deploys via nginx upstream switching, and journald + Vector for structured log shipping. It’s scrappier, but it works until you hit product-market fit and the revenue justifies the infrastructure leap.

What to do with all this

- Default to Ktor coroutines over virtual threads. Memory efficiency is measurably better, structured concurrency is built in, and you sidestep thread-pinning bugs from synchronized in Kotlin’s standard library.

- Delay Kubernetes until you exceed single-node capacity. A $20 VPS with ZGC and Ktor’s Netty engine handles 50K concurrent connections at 7-10x less cost than managed K8s. Measure your actual DAU first.

- If you do use virtual threads, audit for synchronized. Replace lazy with Mutex-guarded initialization, guard blocking critical sections with ReentrantLock instead of synchronized, and run staging with -Djdk.tracePinnedThreads=short to detect pinning before production.

Further reading: JEP 453: Structured Concurrency (Preview) tracks how virtual threads are closing the structured concurrency gap that coroutines handle today.